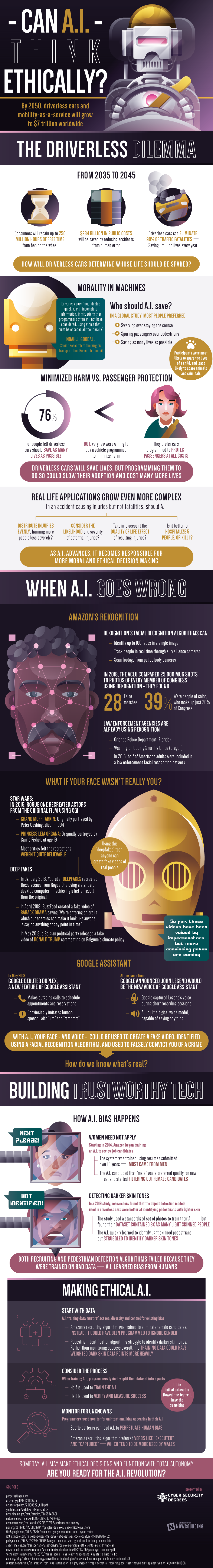

Technology is becoming a more prevalent part of our lives by the day, and pretty soon driving will be one of the many things under its control. By 2050, the market for driverless cars and mobility as a service is estimated to grow to $7 trillion worldwide. From 2035 to 2045, consumers are expected to regain up to 250 million hours of free time that would otherwise be spent driving. $234 billion in public costs will be saved by reducing accidents and property damage caused by human error, and driverless cars can eliminate 90% of all traffic fatalities, saving over 1 million lives every year. But if people are no longer behind the wheel, how will A.I. make the decisions that we make every time we are on the road? And even if it can make those decisions, can it do so in an ethically acceptable way?

In a global study, most people preferred that the A.I. swerve rather than stay the course, spare passengers rather than pedestrians, and save as many lives as possible. Participants were most likely to want the A.I. to spare the life of a child, and least likely to want it to spare pets or criminals. 76% of people felt that driverless cars should save as many lives as possible, but very few were willing to buy a vehicle programmed to minimize harm. Instead, they wanted a driverless car programmed to protect its passengers above all other people and property. Driverless cars will save a huge number of lives by cutting down on distracted driving, but working out how to program them in a way that people will accept could slow their adoption and cost many more lives.

Real-life applications of ethical A.I. can grow even more outlandish and complex, given the many variables at play in our day-to-day lives. And as A.I. advances, it becomes responsible for more and more moral and ethical decision-making.

A.I. has yet another flaw: just like people, it can make mistakes. Amazon’s Rekognition is a facial recognition tool. Its algorithms can identify up to 100 faces in a single image, track people in real time through surveillance cameras, and scan footage from police body cameras. But in 2018, when the ACLU used Rekognition to compare 25,000 mug shots against photos of every member of Congress, it found 28 false matches. 39% of those false matches were people of color, who make up just 20% of Congress.
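
To put those percentages in perspective, here is a rough back-of-the-envelope sketch in Python. It uses only the figures quoted above; the variable names and the `overrepresentation` helper are our own illustration, not anything from the ACLU or Amazon.

```python
# Figures quoted in the article (ACLU test of Rekognition, 2018).
false_matches = 28
share_of_false_matches_poc = 0.39  # people of color among the false matches
share_of_congress_poc = 0.20       # people of color in Congress


def overrepresentation(share_in_errors: float, share_in_population: float) -> float:
    """Hypothetical helper: a group's share of errors divided by its share of the population."""
    return share_in_errors / share_in_population


# Roughly how many of the 28 false matches were people of color.
approx_poc_false_matches = round(false_matches * share_of_false_matches_poc)

# A ratio of 1.0 would mean errors fall in proportion to the group's share of Congress.
ratio = overrepresentation(share_of_false_matches_poc, share_of_congress_poc)

print(f"About {approx_poc_false_matches} of {false_matches} false matches were people of color")
print(f"Over-representation ratio: {ratio:.2f}")
```

In other words, people of color showed up among the false matches at nearly twice the rate their share of Congress would suggest.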

Building ethical A.I. is difficult for programmers, in part because of people’s conflicting preferences about driverless cars. But there are ways to help build trustworthy tech, such as evaluating the data used to build the A.I. and monitoring for unintentional biases.

Find out why A.I. sometimes can’t be trusted, what happens when people don’t accept A.I.’s decisions, and how A.I. can become ethical here.

WebProNews is an iEntry Publication
